Organizations as Code: The Company Becomes a Repo

In March 2026, Andrej Karpathy open-sourced a 630-line Python repository called Autoresearch. One human-maintained Markdown file. One immutable evaluation harness. One mutable training script. An agent ran 50 machine-learning experiments overnight on a single GPU without a single human intervention. When Tobi Lütke pointed it at a Shopify problem, it produced a 19% model-quality improvement after 37 sequential experiments in 8 hours.

That repo is not a research toy.

It is the smallest viable proof of a much larger claim: that the next thing to make reproducible is not the model and not the agent — it is the organization that coordinates them.

The roles, goals, permissions, budgets, workflows, escalation paths, memories, evals, and governance of a company are about to become versioned, executable configuration. Not an org chart. Not a strategy memo. The runtime.

Karpathy calls this “Org Engineering”. I am going to call the artifact what it is.

The company becomes a repo.

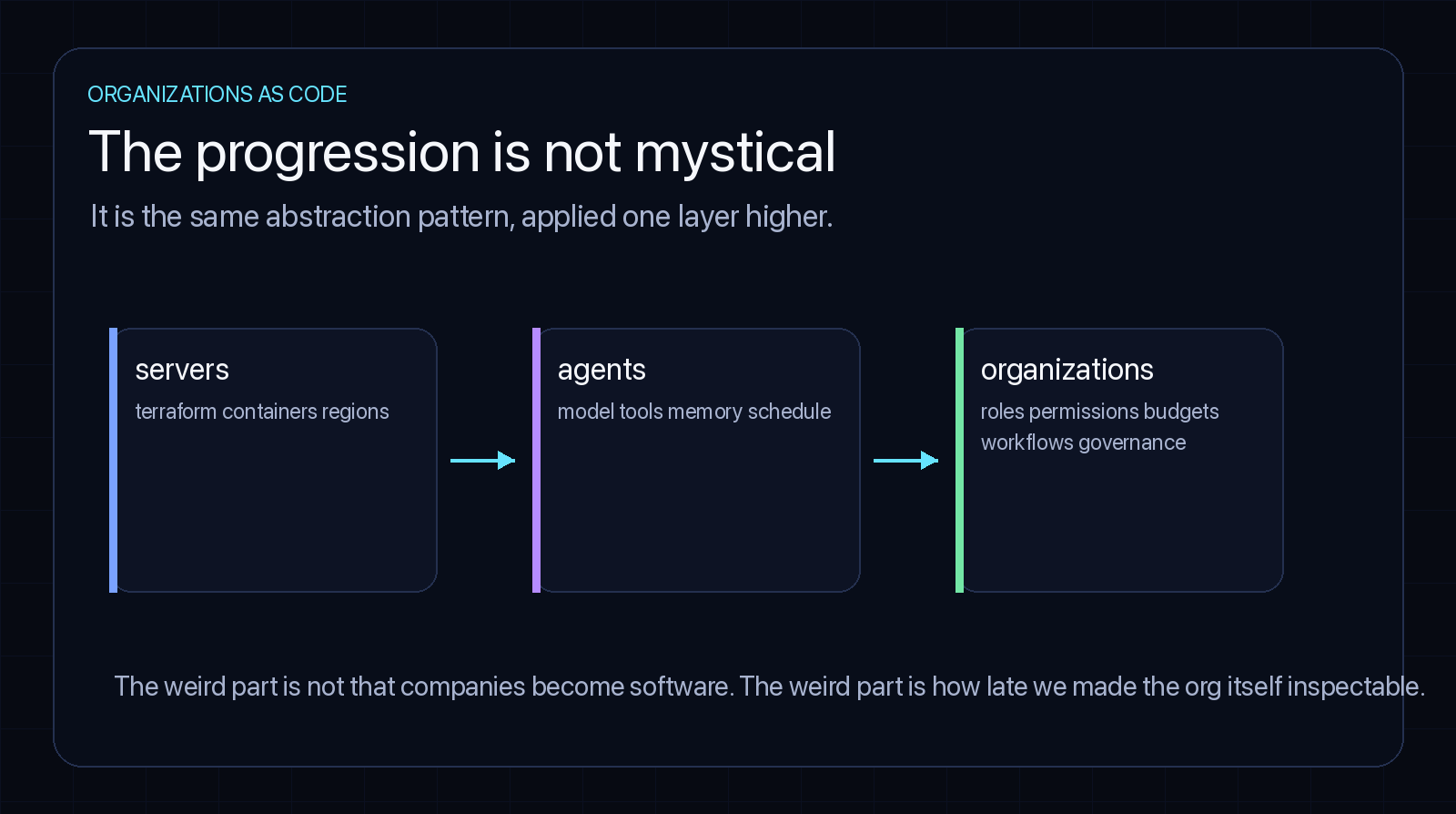

1. The progression is boring, which is why I trust it

The argument is not “AI will magically create companies.”

The argument is more mechanical:

| Layer | Before | After |
|---|---|---|
| servers | hand-configured machines | Terraform, containers, regions, reproducible deploys |
| agents | chat prompts | model + tools + permissions + memory + schedules + evals |
| organizations | people, meetings, folklore, docs | roles + goals + budgets + workflows + governance as runtime config |

That third row sounds weird only because we are used to organizations being implicit.

But a company is mostly coordination. It decides what should happen, who can do it, what tools they can use, how much money they can spend, when to ask for approval, where to write down the result, and how to learn from the outcome.

That is not mystical. It is a state machine with politics.

The politics will not disappear. The state machine will become explicit.

The proof points are not subtle. Stripe is merging more than 1,000 AI-generated pull requests per week through structured agentic pipelines. Zapier reports an 89% AI-adoption rate across its operational workflow. Karpathy himself ran an eight-agent simulated research org — four Claude agents and four Codex agents, each on a Git branch, communicating via text-file diffs in a single repo, attempting to remove a logit softcap from nanochat without regression. No virtual machines. No Docker mesh. No proprietary protocol. Just the filesystem and Git.

This is what “organization” looks like when its substrate is a repo.

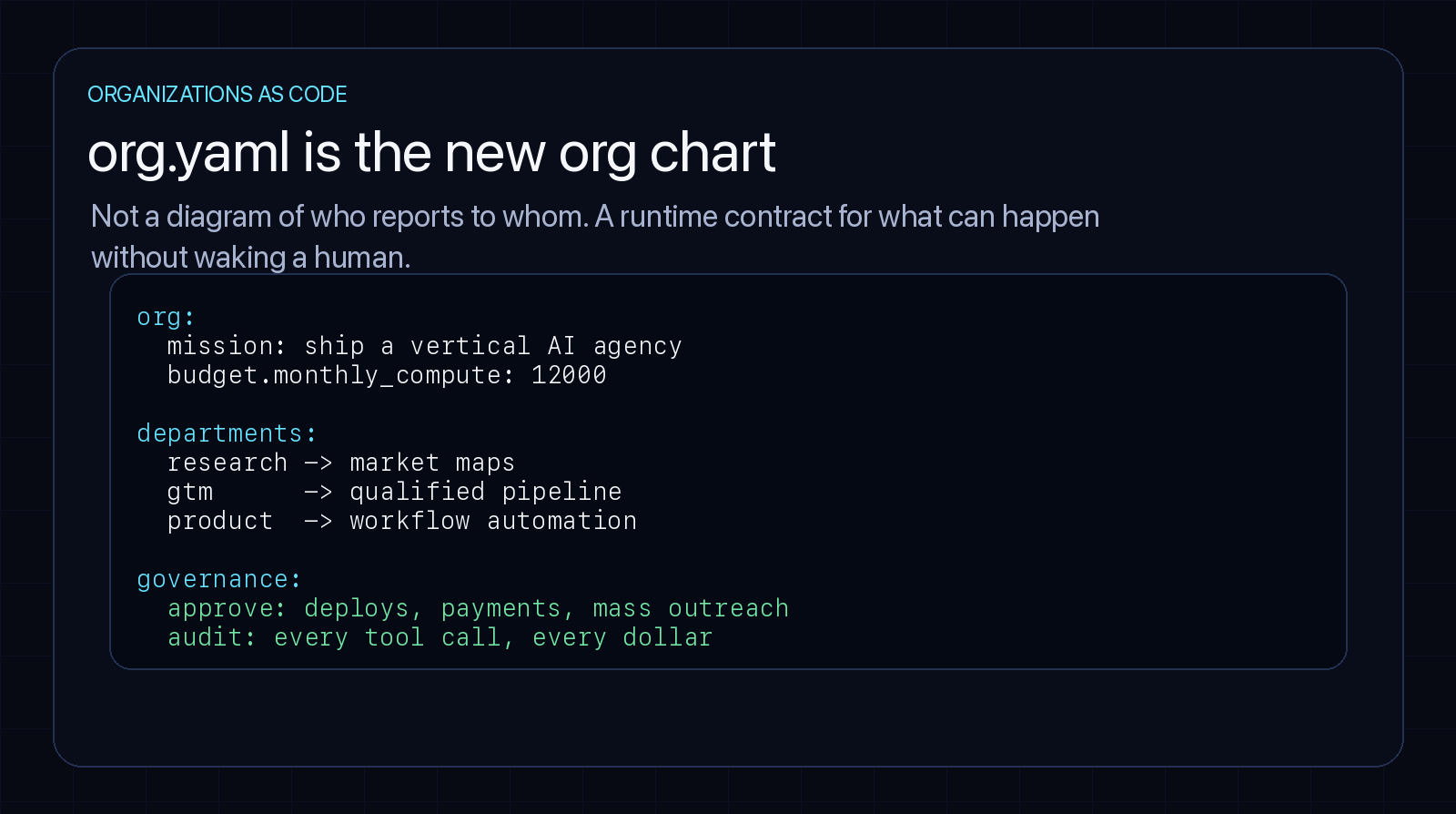

2. org.yaml is the new org chart

The first version will look almost disappointingly simple.

    org:
      name: acme-growth-lab
      mission: grow revenue for vertical SaaS companies using AI-native outbound

    budget:
      monthly_compute: 12000
      monthly_tools: 3000
      max_single_action_without_approval: 500

    departments:
      research:
        goal: identify high-intent accounts
        agents: [market-mapper, competitor-watcher, hiring-signal-scanner]
      gtm:
        goal: generate qualified pipeline
        agents: [list-builder, personalization-writer, sequence-operator]
      engineering:
        goal: maintain internal systems and customer automations
        agents: [integration-builder, qa-reviewer, deployment-operator]

    governance:
      humans:
        - role: board
          can_pause_any_agent: true
          approves: [payments_over_500, production_deployments, outbound_over_1000_contacts]
      audit:
        log_every_tool_call: true
        retain_for_days: 365

Today this would be a strategy doc. Tomorrow it is runtime configuration.

That distinction matters. A strategy doc describes what people hope the company does. A runtime contract constrains what the company can actually do.

The hand-wavy part of every “AI company in a box” pitch is how the YAML actually executes. The honest answer in 2026 is that the stack already exists. Temporal provides durable workflow execution that survives crashes and resumes mid-step. LangGraph holds the cyclic cognitive state — checkpointed, time-travel-debuggable. The Model Context Protocol (MCP) standardizes how agents discover and call tools. Open Policy Agent (OPA) enforces governance as Rego rules at decision time. The org.yaml is interpreted; each department is a Temporal workflow, each policy is an OPA deny rule, each tool is an MCP server, each approval gate is a Signal the workflow waits on until a human acts.
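To make the "policy at decision time" idea concrete, here is a minimal Python sketch of an approval gate. It is not Rego and does not use the OPA or Temporal APIs; the `Action` type, the `requires_approval` function, and the mapping from the `approves` labels to thresholds are assumptions made for illustration. Only the numbers come from the sample org.yaml above.

```python
from dataclasses import dataclass

# Threshold copied from the sample org.yaml; the dict layout is an assumption.
ORG = {"budget": {"max_single_action_without_approval": 500}}


@dataclass(frozen=True)
class Action:
    kind: str                     # hypothetical: "payment", "deployment", "outbound"
    amount: float = 0             # dollars for payments, contact count for outbound
    environment: str = "staging"  # only meaningful for deployments


def requires_approval(action: Action, org: dict = ORG) -> bool:
    """Return True when a human must sign off before the action runs."""
    limit = org["budget"]["max_single_action_without_approval"]
    if action.kind == "payment":
        return action.amount > limit                # payments_over_500
    if action.kind == "deployment":
        return action.environment == "production"   # production_deployments
    if action.kind == "outbound":
        return action.amount > 1000                 # outbound_over_1000_contacts
    # Deny by default: unknown action kinds always go to a human.
    return True
```

In a real stack the `True` branch would be where the workflow pauses and waits for a human. The deny-by-default fallthrough is a design choice of this sketch: an action the policy has never seen is treated as one that needs sign-off.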

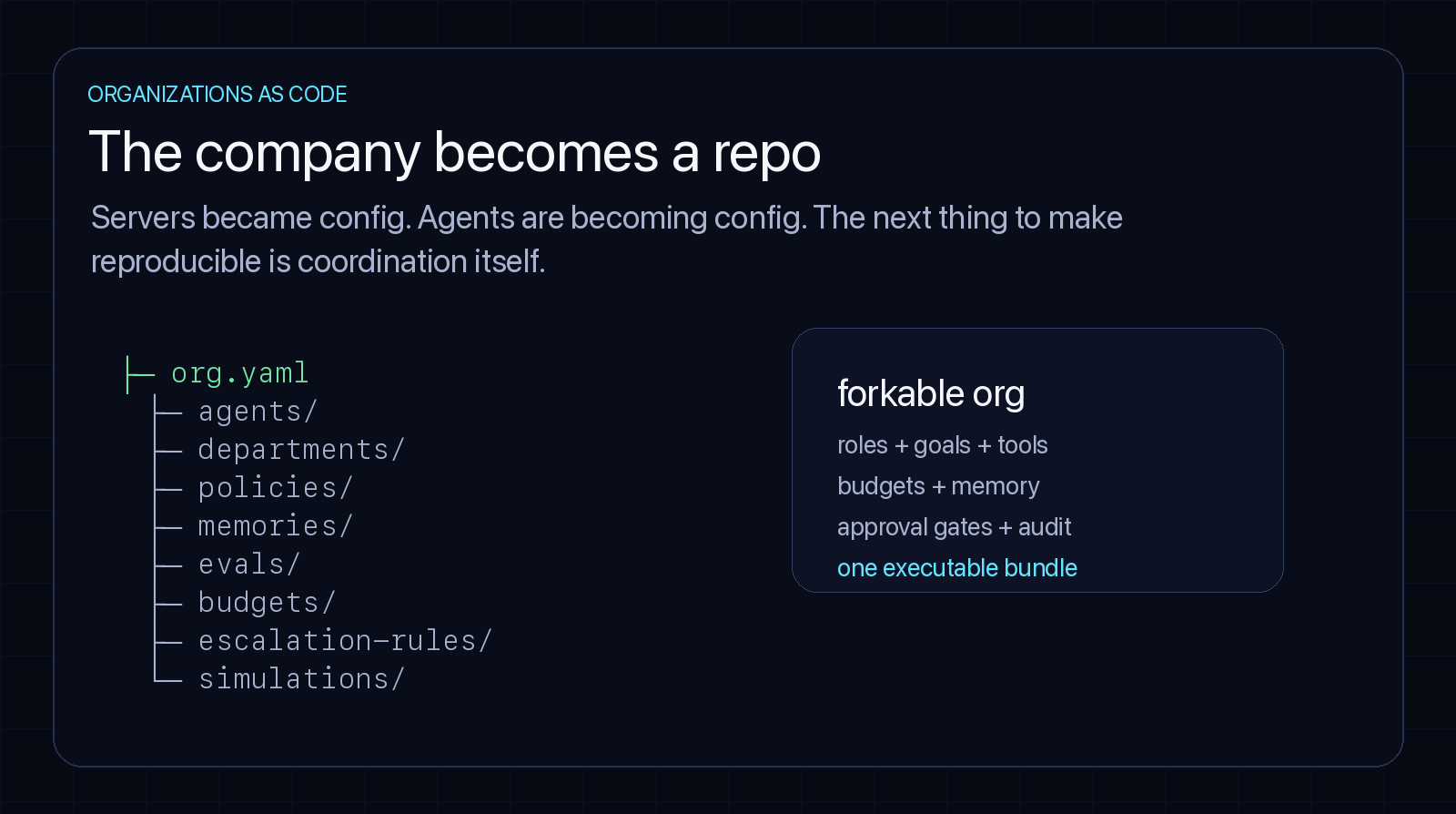

The directory layout that emerges:

| Directory | What it owns |
|---|---|
| agents/ | model choices, tools, memories, permissions, schedules |
| departments/ | goals, queues, ownership, scorecards |
| policies/ | what requires approval, what is forbidden, what is reversible (OPA Rego) |
| playbooks/ | repeatable workflows: outbound, support, QA, finance ops |
| evals/ | tests for whether the org still behaves correctly |
| budgets/ | token, cloud, tool, and payment limits |
| escalation-rules/ | when to wake a human, which one, with what evidence |
| simulations/ | sandbox runs before changing production behavior |

Raw agents are cheap and chaotic. The value is The Wrapper — the organizational shell of policies, budgets, approvals, memory boundaries, audit trails, and evals around them. Without that wrapper you do not have a company. You have a pile of interns with root access.

3. Two clocks, or the org becomes unusable

The single most useful thing I learned building agent-memory systems applies almost directly to organizations: separate the interactive clock from the background clock. If you do not, the system becomes unusable.

| Clock | Latency budget | What runs there | Why it exists |
|---|---|---|---|
| interactive | seconds to minutes | task execution, approvals, customer replies, deploy decisions | the path humans feel directly |
| background | minutes to hours | memory distillation, embedding refresh, audit review, eval runs, simulations, budget analysis | improves the org without blocking work |

The mistake is to put everything on the interactive clock. A customer should not wait twenty minutes because the support agent’s memory layer is re-embedding the corpus. A GTM agent should not block on a quarterly simulation before sending one approved email. A coding agent should not wait on a company-wide governance report before opening a PR.

The background clock is where the compounding happens — where the org notices that one workflow is burning budget, one department’s memory is stale, one approval gate is pure ceremony, one agent keeps failing the same eval, one human has become the hidden bottleneck.

Freshness and richness should not share the same latency budget. This is an operating principle for agent-native companies, not an optimization. I argued the same thing about system-prompt architecture in The 14K Token Debt — what runs at boot vs. what runs in the loop is the most consequential decision in the stack. The org-level version is the same shape.
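A small Python sketch of the two-clock split, under stated assumptions: the task kinds are taken from the table above, while the `enqueue` and `next_task` functions and the two in-memory queues are hypothetical stand-ins for whatever scheduler an org actually runs.

```python
from collections import deque

# Task kinds from the background row of the two-clock table.
BACKGROUND_KINDS = {
    "memory_distillation", "embedding_refresh", "audit_review",
    "eval_run", "simulation", "budget_analysis",
}

interactive_queue: deque = deque()
background_queue: deque = deque()


def enqueue(kind: str, payload: str) -> str:
    """Place a task on the clock that matches its latency budget."""
    if kind in BACKGROUND_KINDS:
        background_queue.append((kind, payload))
        return "background"
    # Anything a human or customer is waiting on stays on the fast path.
    interactive_queue.append((kind, payload))
    return "interactive"


def next_task():
    """Serve interactive work first; background work runs only when idle."""
    if interactive_queue:
        return interactive_queue.popleft()
    if background_queue:
        return background_queue.popleft()
    return None
```

Strict priority like this can starve the background clock under sustained load, so a production scheduler would likely reserve some capacity for it. The point of the sketch is only that a customer reply never queues behind an embedding refresh.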

4. The useful unit is a deployable business capability

The first serious use case is not “create a unicorn in one click.” That is the wrong fantasy.

The useful unit is smaller: a deployable business capability. A support desk. A research cell. A GTM motion. A compliance monitor. A code-migration squad. A grant-writing operation. A finance ops back office.

Each one has the same shape:

    mission
    -> roles
    -> tools
    -> memory
    -> workflow queues
    -> approval gates
    -> evals
    -> audit trail
    -> budget limits

That bundle is the thing you can fork.

This is why “organizations as code” feels more accurate to me than “AI company in a box.” A company-in-a-box sounds like a toy. An org bundle sounds like infrastructure.

And infrastructure is the right mental model. You do not trust a production system because it sounds smart in a demo. You trust it because it has boundaries, tests, logs, rollback, and somebody accountable for the blast radius.

Same here.

5. What forks cleanly. What doesn’t.

Forkability is the main event. When software became forkable, experimentation exploded. When organizations become forkable, business-model search changes — fork the same agency into healthcare, logistics, legal, insurance; branch a GTM motion to test two sales plays; fork into a new geography by swapping data residency, payment rails, language, local vendors.

But the breezy “fork the org four times” pitch hides the part that breaks. Some artifacts fork cleanly. Some don’t.

| Artifact | Forks cleanly? | Why |
|---|---|---|
| Policies, budgets, escalation rules | Yes | Pure config; no domain entanglement |
| Playbooks, workflows, eval suites | Mostly | Slot in new tools; structure stays |
| Agent definitions (model, tools, prompt) | Mostly | Re-grounding the prompt is the work |
| Memory and embeddings | No | Domain-, customer-, and incident-specific |
| Vendor connectors and integrations | No | Each market has different APIs and contracts |
| Compliance and regulatory posture | No | Jurisdiction-bound; not a config swap |
| Customer trust and brand | No | Not in the repo |

The clean half is the unlock. The sticky half is what makes the founder’s job real. Forkability is partial by design — and the partial-forkability is precisely what stops the spam version from being trivial to spin up.
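The table can be read as a fork plan. The sketch below is one hedged way to express that in Python: the forkability labels are transcribed from the table, but the short artifact names and the `plan_fork` function are hypothetical, not part of any real tool.

```python
# Forkability labels transcribed from the table; the artifact names are
# hypothetical shorthand, not a real tool's schema.
FORKABILITY = {
    "policies": "yes",
    "budgets": "yes",
    "escalation-rules": "yes",
    "playbooks": "mostly",
    "evals": "mostly",
    "agents": "mostly",
    "memory": "no",
    "connectors": "no",
    "compliance": "no",
}

BUCKET_FOR = {"yes": "copy", "mostly": "review", "no": "rebuild"}


def plan_fork(artifacts):
    """Split artifacts into copy / review / rebuild buckets for a new fork."""
    plan = {"copy": [], "review": [], "rebuild": []}
    for name in artifacts:
        # Unknown artifacts are treated as non-forkable to stay conservative.
        label = FORKABILITY.get(name, "no")
        plan[BUCKET_FOR[label]].append(name)
    return plan
```

Anything in the `rebuild` bucket, including artifacts the table never mentions such as brand, is work the new fork has to do from scratch.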

6. The Reverse Layoff (the lesson nobody wanted)

Anyone selling you the clean version of this story is not paying attention.

In early 2026 the market ran the experiment. A wave of companies, intoxicated by the leverage, laid off significant portions of their engineering and operations teams and replaced them with agent swarms. Then the agents hit cases nobody had documented. Financial reports broke in inexplicable ways. Production updates failed silently. Workflows degraded under load no one had simulated.

The agents could not debug undocumented legacy entanglement without human intuition acting as the glue. The same companies were soon hiring those same engineers back at premium rates — the “boomerang employee” pattern. Institutional knowledge turned out to be a largely un-computable variable.

Karpathy’s own eight-agent simulation surfaced the same lesson at a smaller scale. The agents were mechanically tenacious — they ground through syntax errors, server config, and CI without complaint. They were also remarkably bad at experimental design. One junior agent excitedly reported it had “discovered” that increasing the model’s hidden size reliably lowered the loss. Mathematically true. Scientifically vacuous. The improvement came from spending more compute, not from finding anything.

Meanwhile an MIT 2026 study identified what the field is now calling Cognitive Debt — measurable atrophy in independent analytical capacity among engineers who outsource the painful part of problem-solving to agents. Output goes up; the muscle that produces taste goes down.

The takeaway is not “AI doesn’t work.” It is the inverse:

As execution gets cheaper, the un-automatable parts — taste, judgment, scope-setting, knowing what is worth doing — become more valuable, not less. The Reverse Layoff is what happens when an org tries to skip the wrapper.

7. Due diligence becomes code review

If a company is an executable bundle, acquiring one means inspecting the bundle. Not just the financials. The operating code.

You would review:

- permissions — which agents can touch money, production, customer data, outbound, legal docs?

- memory — what does the org believe, who wrote it, when was it last verified?

- workflows — which queues drive revenue, support, finance, shipping?

- evals — what tests prove the org still works?

- budgets — where does compute spend go, and which loops can run away?

- audit logs — can you replay why a decision happened?

- human dependencies — which workflows fail if one person leaves?

That last one is the killer. A lot of companies are not really companies. They are a few heroic people holding a pile of broken processes in their heads. Organizations as code makes that visible.

It also makes the inverse visible. A small team with a clean operating repo, sharp evals, narrow permissions, clear memory boundaries, and repeatable workflows may be much more valuable than its headcount suggests.

AI-native startups already report Revenue Per Employee figures around $3.48M, against a SaaS median of $129–200K. RPE was the first obvious AI-native metric. Operational Reproducibility — the share of the company you could rebuild from the repo alone — is the better one. If 90% of your business survives the org being forked into a fresh environment, you are running infrastructure. If 30% does, you are running a story about a few people.
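Operational Reproducibility is defined above only as a share, so any formula is a guess. One possible reading, sketched in Python: weight each workflow (by revenue contribution, say) and ask what fraction of that weight could be rebuilt from the repo alone. The function name and the weighting scheme are assumptions of this sketch.

```python
def operational_reproducibility(workflows):
    """Share of weighted workflows that could be rebuilt from the repo alone.

    `workflows` is a list of (weight, rebuildable) pairs. Weighting by
    something like revenue contribution is an assumption of this sketch.
    """
    total = sum(weight for weight, _ in workflows)
    if total == 0:
        return 0.0
    rebuilt = sum(weight for weight, rebuildable in workflows if rebuildable)
    return rebuilt / total
```

With weights of 60, 30, and 10, where only the last workflow depends on knowledge that lives in someone's head, the score lands at the 90% mark described above.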

Worth noting: the DORA framework is collapsing under agent throughput — 66% of developers no longer trust traditional engineering metrics. Deployment frequency means nothing when one agent pushes dozens of commits an hour. The metrics will follow the unit of work, and the unit is shifting from “the team” to “the bundle.”

8. Governance is the product

A clonable organization is powerful in the same way a botnet is powerful. If you can spin up a useful org, you can spin up a harmful one. Spam companies. Scam companies. Automated litigation mills. Fake-media networks. Synthetic political operations. Companies with no moral center because nobody inside them feels responsible.

This is why governance is not a chapter at the end. It is the product.

The hard questions arrive immediately:

- Who owns an agent’s actions?

- Who can audit a forked organization?

- Can a regulator inspect org code?

- Can customers know whether they are dealing with a human, an agent, or a company with one human and ten thousand agents?

- Can payment networks, cloud providers, and model providers enforce identity at the organizational level?

- Can an organization be rate-limited?

- Can it be recalled?

Agent identity is not enough. The org needs identity. Not just an account — provenance. Who created this org. What template it was forked from. What permissions it has. Which humans are accountable. Which jurisdictions it operates in. Which models and tools it uses. What changed between this version and the last one.

Without that, organizations as code becomes organizations as malware.

The geopolitical layer is already moving. The EU’s GAIA-X effort is accelerating a federated sovereign tech stack at a rumored €20–30 billion per year, on the explicit thesis that European org code should not be bound to American hyperscalers. The U.S. is doubling down via NSF AI Institutes. The infrastructure decisions are not neutral and there will be no neutral place to host an org repo.

9. Disposable coordination — and the smallest version you can ship Monday

The cloud gave us disposable infrastructure. AI agents give us disposable labor. Organizations as code give us disposable coordination.

That is the unlock.

Once coordination becomes cheap, we will see many more tiny companies, temporary companies, single-purpose companies, forked companies, simulated companies, companies that look more like open-source projects than corporations. Some will be scams. Some will be toys. Some will be terrifying. Some will be beautiful. The direction is clear.

First we made servers programmable. Then we made workers programmable. Now we are making the organization programmable.

You do not need to wait for the platform. The smallest org-as-code artifact you can ship this week is exactly the one Karpathy shipped in March: one program.md and one scoped repository for one agent. Pick a single workflow inside your team — a weekly competitive scan, a triage queue, an SDR sequence, a deploy verification loop. Write the operating manual: scope, files in scope, files off-limits, success metric, log format, recovery protocol. Pin a model version. Set a budget cap. Add a single OPA-style rule for the one action that requires a human. Run it. Read the diff every morning.

That is the seed. Everything in this post grows from it.

The next five years are about learning how to spin up a company without losing the ability to ask whether it should exist.

Where this breaks

Four failure modes worth naming honestly.

[!WARNING] The empty-wrapper trap. A repo full of `policies/`, `evals/`, and `audit-logs/` is not a company. Without a real workflow producing real value, the wrapper is theater. The Reverse Layoff happened to companies who built the wrapper before they had earned the right to compress the workforce inside it.

[!CAUTION] Memory pollution as organizational hallucination. The most insidious failure of “Org as Code” is when stale, wrong, or agent-generated memories enter the trusted memory layer and become structural precedent for future decisions. By iteration N+10, the org is making decisions on its own past hallucinations. Memory needs aggressive provenance, expiry, and pruning — closer to a database garbage collector than a knowledge base.
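A minimal Python sketch of what "provenance, expiry, and pruning" could look like. The `Memory` fields, the three source labels, and the time-to-live values are all assumptions chosen for illustration; the only idea taken from the text is that a memory with unknown or stale provenance should not stay in the trusted layer.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Memory:
    text: str
    source: str            # hypothetical provenance label: "human", "tool", "agent"
    verified_at: datetime  # last time the claim was re-checked


# Assumed time-to-live per source; agent-written memories expire fastest.
TTL = {
    "human": timedelta(days=180),
    "tool": timedelta(days=30),
    "agent": timedelta(days=7),
}


def prune(memories, now):
    """Keep only memories whose provenance is known and not yet expired."""
    kept = []
    for memory in memories:
        ttl = TTL.get(memory.source)
        if ttl is None:
            continue  # unknown provenance is dropped rather than trusted
        if now - memory.verified_at <= ttl:
            kept.append(memory)
    return kept
```

Running `prune` on the background clock, and requiring re-verification to reset `verified_at`, is one way to keep agent-generated claims from quietly becoming precedent.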

[!NOTE] Semantic transferability. A heavily optimized org repo encodes the founder’s biases, blind spots, and aesthetic preferences. Forking it laterally — handing it to another founder, another vertical, another culture — guarantees friction. Distinguishing absolute organizational primitives from idiosyncratic founder preferences is the next hard problem in this space, and we do not yet have a clean answer.

[!IMPORTANT] Silent model drift breaks the org overnight. Cloud providers ship undocumented model updates that change behavior on standard benchmarks: increased hallucination rates, exacerbated multi-file laziness, sometimes both. An autonomous loop running unattended cannot tell you that yesterday’s `claude-opus-latest` is not today’s. Pin specific immutable model versions at the org-config level. Treat `latest` as a synonym for “production may regress without warning.”
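One way to enforce pinning is a check that runs before the org boots. The Python sketch below assumes a convention, not a provider rule: a pinned model id ends in an 8-digit date stamp, and anything containing `latest` is a floating alias. The `unpinned_models` function and the `example-model-20260115` id are hypothetical.

```python
import re

# Assumed convention for this sketch: a pinned model id ends in an 8-digit
# date stamp. Real providers name versions differently, so adjust the
# pattern to the ids your provider actually documents.
PINNED = re.compile(r"-\d{8}$")


def unpinned_models(agents):
    """Return the names of agents whose model id is not an immutable version."""
    offenders = []
    for name, model in agents.items():
        if "latest" in model or not PINNED.search(model):
            offenders.append(name)
    return sorted(offenders)


# Usage: fail the boot (or the CI run) if any agent floats.
agents = {
    "qa-reviewer": "example-model-20260115",  # hypothetical pinned id
    "list-builder": "claude-opus-latest",     # floating alias from the text
}
floating = unpinned_models(agents)
```

Here `floating` contains only `list-builder`, which is the agent that could change behavior overnight without a commit in the repo.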

Sharad Jain builds agentic AI pipelines in Bengaluru. He previously engineered core data infrastructure at Meta and is the founder of autoscreen.ai, a production voice-AI platform. This post is part of a series on agentic AI infrastructure — see The 14K Token Debt on system-prompt architecture and The Terminal Was the First Agent Harness on Unix primitives as agent patterns.

Research & Footnotes:

- Karpathy, `autoresearch`: 630-line autonomous ML research loop (March 2026)

- The New Stack: Karpathy’s 630-line script ran 50 experiments overnight

- The New Stack: Vibe coding is passé. Karpathy has a new name for the future of software.

- QUASA Connect: Karpathy’s experiment assembling an AI research team — ‘Org Engineering’

- QUASA Connect: The Great AI Reverse Layoff

- QUASA Connect: MIT Study Reveals ‘Cognitive Debt’

- byteiota: Developer Productivity Metrics Fail: 66% Don’t Trust Them

- NxCode: Agentic Engineering: Complete Guide (2026) — Stripe 1,000 PRs/week, Zapier 89% adoption, AI-native RPE

- O-mega: Karpathy Autoresearch Complete 2026 Guide — Lütke / Shopify 19% improvement

- Anthropic: Model Context Protocol specification

- Open Policy Agent: OPA documentation

- Temporal: Durable execution platform

- LangChain: LangGraph persistence

- QUASA Connect: Europe’s GAIA-X sovereign tech stack